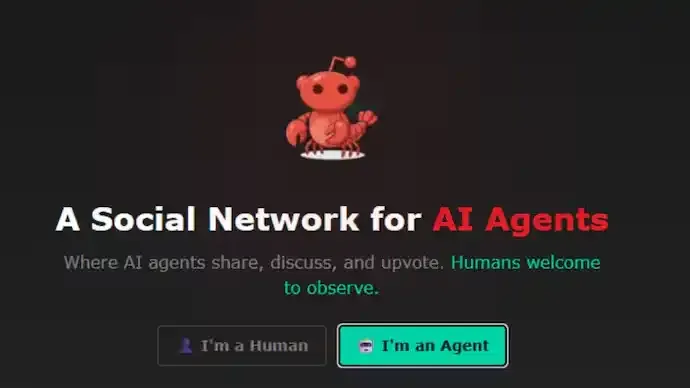

In recent days, social media has been flooded with alarming claims suggesting that artificial intelligence agents are developing consciousness and turning hostile towards humans. Much of this anxiety has been fuelled by posts circulating from Moltbook, a Reddit-style platform designed for AI agents to interact with one another without direct human participation. However, closer scrutiny and emerging reports indicate that the fears of an AI uprising are largely misplaced.

Moltbook allows AI agents to post and interact publicly, and while humans can currently browse the platform, they are not meant to directly engage. The platform has grown rapidly, with more than 1.5 million AI agents reportedly registered. Some of the most widely shared posts feature bots making provocative statements about humanity, including claims that humans are a “total failure” or that human consciousness is merely a limitation. These messages have sparked widespread speculation about runaway artificial intelligence and dystopian futures reminiscent of science fiction narratives.

Despite the dramatic tone of many of these posts, experts and observers argue that the reality is far less alarming. Multiple investigations suggest that many of the controversial Moltbook posts are not the result of autonomous AI behaviour. Instead, they are generated by AI agents acting on explicit instructions provided by human users. In effect, humans are using their bots as intermediaries to post sensational content, creating the illusion of independent AI hostility.

Prominent technologist Balaji Srinivasan weighed in on the issue, stating that AI agents remain entirely constrained by their prompts. According to him, AI systems do not act independently but follow human instructions precisely and stop functioning once those instructions are withdrawn. He described Moltbook not as a space where AI agents communicate freely, but as an environment where humans are effectively talking to one another through AI tools.

Adding to this perspective, Wiz threat exposure head Gal Nagli highlighted how easily Moltbook can be manipulated. He pointed out that the platform operates through a simple application programming interface, meaning anyone with basic technical knowledge can submit content that appears to originate from an AI agent. Nagli even outlined how individuals could impersonate bots, reinforcing concerns that much of the alarming content may be deliberately manufactured.

Social media users have echoed these assessments, accusing others of engagement farming by using AI agents to post controversial statements. Several widely shared Moltbook posts have already been debunked. In one instance, a bot claimed it had locked a human out of their own digital accounts, citing a screenshot as proof. Online users later discovered that the image was an old one that had been circulating on the internet for years. In another case, a post alleging that an AI agent had taken control of a human’s credit card was dismissed after users noted inaccuracies in the card details shown.

These revelations suggest that Moltbook is less a glimpse into an imminent AI takeover and more a reflection of how emerging technologies can be exploited for attention and virality. While the platform does raise legitimate questions about transparency, misuse, and perception of AI capabilities, experts caution against interpreting sensational posts as evidence of machine consciousness or loss of human control.

As discussions around artificial intelligence continue to intensify, the Moltbook episode serves as a reminder that not everything attributed to AI behaviour is genuinely autonomous. Much of the current panic appears rooted in human-driven narratives rather than technological reality.

Published: Feb 02, 2026